This white paper covers our tensor programming model, benchmarks, and extensibility to custom hardware, with models running directly on encrypted data.

After two years of research and engineering, we are releasing Lattica's first technical white paper: a detailed look at how we've made Fully Homomorphic Encryption (FHE) practical for real-world AI inference.

What's Inside

Architecture and Performance

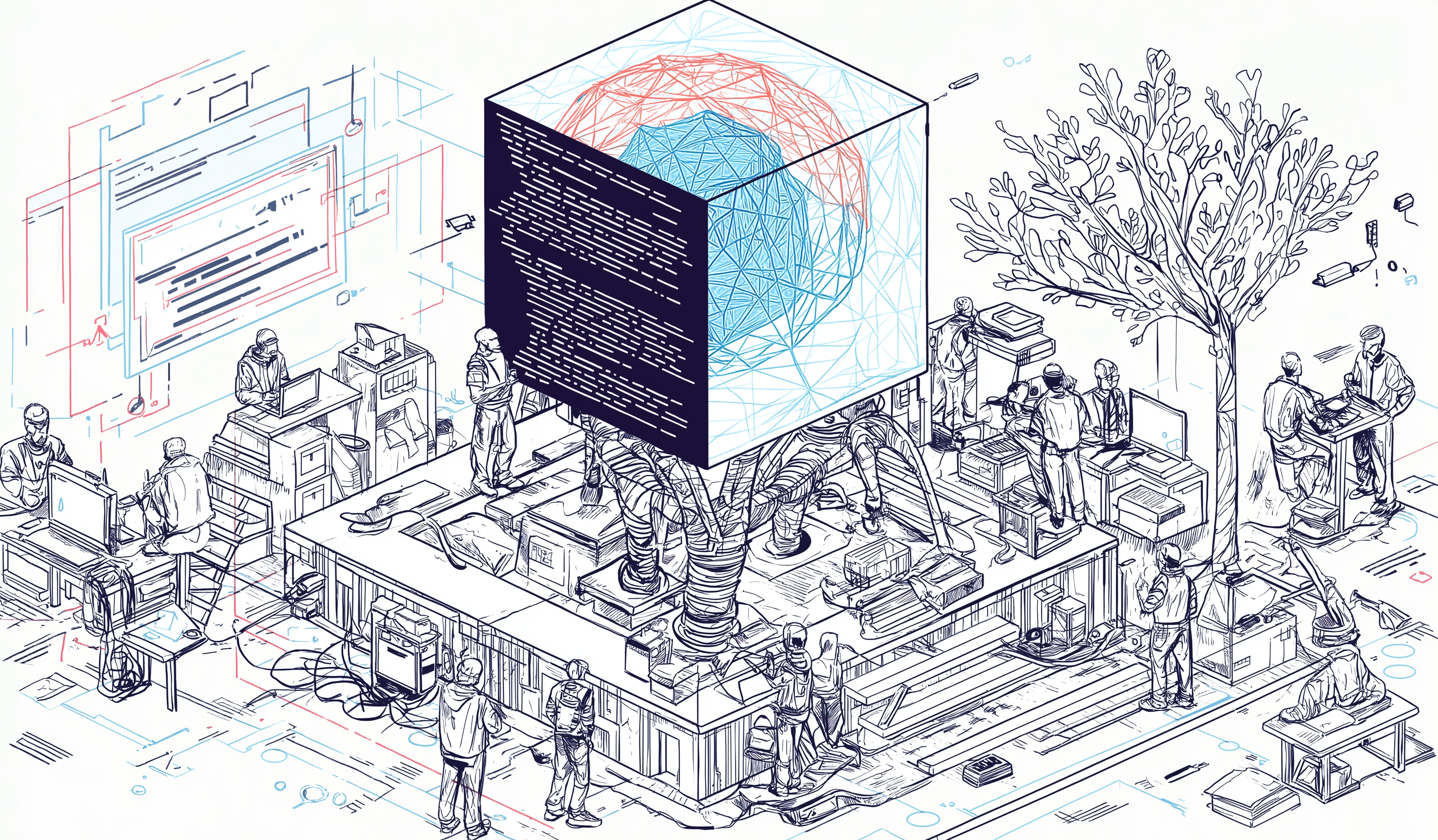

How we re-engineered FHE to run efficiently on GPUs (and beyond), and built a layered system from CUDA kernels to PyTorch bindings.

Usability in Practice

Model adaptation that makes standard ML models FHE-compatible, and a lightweight client optimized for edge devices (CPUs and browsers).

Portability and Extensibility

Our Homomorphic Encryption Abstraction Layer (HEAL), enabling integration with GPUs, TPUs, and future custom hardware.

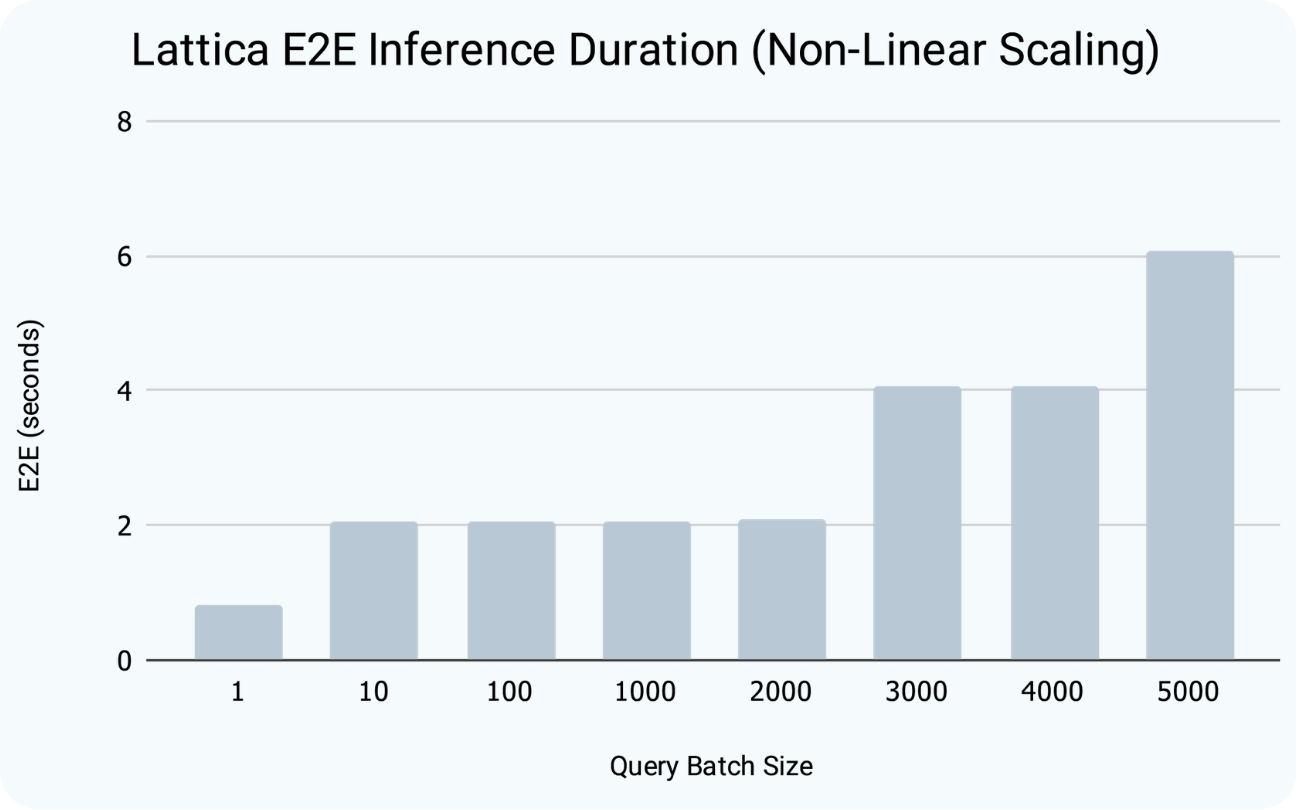

Encrypted Inference that Runs in Seconds, Not Hours.

Encrypted inference is no longer confined to academic benchmarks; it is now deployable, performant, and economically viable. Explore the white paper and join us in bringing privacy-preserving AI to real-world use cases.